
Why Fintechs and Banks are Investing Heavily in KYC Automation in 2026

The financial industry is entering a new phase of digital transformation where speed, security, and compliance must work together seamlessly. In 2026, fintech companies and banks are investing aggressively in KYC (Know Your Customer) automation to address rising fraud risks, growing customer expectations, and increasingly complex regulatory requirements.

Traditional KYC processes that once relied heavily on manual verification are no longer sufficient for modern financial ecosystems. Customers expect instant onboarding, regulators demand stronger compliance, and businesses need scalable systems capable of handling thousands of verifications daily. KYC automation has become a strategic necessity rather than just an operational upgrade.

The Rising Pressure on Financial Institutions

Banks and fintech firms today face a difficult balancing act. On one side, they must onboard customers quickly to remain competitive. On the other, they must maintain strict compliance with anti-money laundering (AML) regulations and fraud prevention standards.

Manual KYC workflows often create major bottlenecks:

- Delayed customer onboarding

- High operational costs

- Human verification errors

- Increased compliance risks

- Poor customer experience

- Difficulty scaling during growth periods

For digital-first fintech companies, even a small delay in onboarding can lead to customer drop-offs. In highly competitive markets, users rarely wait days for account approval when another platform can complete onboarding within minutes.

This is where KYC automation is changing the landscape.

Faster Customer Onboarding is Driving Adoption

One of the biggest reasons financial institutions are investing in KYC automation is speed.

AI-powered verification systems can automatically extract, validate, and process customer documents in real time. Technologies such as OCR (Optical Character Recognition), facial matching, liveness detection, and intelligent document processing significantly reduce manual intervention.

Instead of waiting hours or days for verification, customers can now complete onboarding within minutes.

For banks and fintech firms, this means:

- Higher conversion rates

- Reduced onboarding abandonment

- Faster account activation

- Improved customer satisfaction

- Lower operational workload

In an era where digital experience determines customer loyalty, onboarding speed has become a competitive differentiator.

Fraud Detection Has Become More Complex

Fraud techniques have evolved dramatically over the last few years. Financial institutions are now dealing with:

- Synthetic identities

- AI-generated fake documents

- Deepfake facial manipulation

- Identity theft

- Cross-border financial fraud

Traditional manual review teams often struggle to detect sophisticated fraudulent patterns at scale.

Modern KYC automation platforms use AI and machine learning to identify anomalies, flag suspicious behaviors, and validate document authenticity more accurately than manual processes alone.

Automated systems can compare data across multiple checkpoints simultaneously, including:

- Government-issued ID verification

- Biometric authentication

- Database cross-checks

- Device intelligence

- Behavioral analysis

This multi-layered approach significantly strengthens fraud prevention capabilities.
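As a rough illustration of how layered checkpoints might be combined into one onboarding decision, here is a minimal sketch. The `CheckResult` type, the 0-to-1 suspicion scale, and the `review_threshold` value are all assumptions made for this example, not part of any specific KYC platform.

```python
from dataclasses import dataclass

@dataclass
class CheckResult:
    name: str      # e.g. "id_document", "biometric" (hypothetical names)
    passed: bool   # hard pass/fail from the checkpoint
    score: float   # 0.0 (clean) to 1.0 (highly suspicious); assumed scale

def decide(results: list[CheckResult], review_threshold: float = 0.5) -> str:
    """Return 'reject', 'review', or 'approve' from layered check results."""
    if not results:
        return "review"  # nothing was checked, so a human should look
    # Any hard failure (for example a forged ID) rejects outright.
    if any(not r.passed for r in results):
        return "reject"
    # Otherwise the most suspicious single signal drives escalation.
    worst = max(r.score for r in results)
    return "review" if worst >= review_threshold else "approve"

checks = [
    CheckResult("id_document", True, 0.1),
    CheckResult("biometric", True, 0.2),
    CheckResult("database", True, 0.0),
    CheckResult("device", True, 0.7),
    CheckResult("behavior", True, 0.3),
]
print(decide(checks))  # review
```

Here every checkpoint passes, but the device signal is suspicious enough to route the applicant to manual review instead of instant approval.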

Regulatory Compliance is Becoming More Demanding

Global regulatory frameworks are becoming stricter every year. Financial institutions must comply with evolving AML, data privacy, and identity verification regulations across multiple jurisdictions.

Manual compliance processes create risks because they depend heavily on human consistency. Even minor verification mistakes can result in penalties, audits, reputational damage, or regulatory scrutiny.

KYC automation helps institutions standardize compliance workflows by:

- Creating audit-ready verification trails

- Reducing inconsistencies

- Ensuring policy-based validation

- Automating risk scoring

- Maintaining centralized compliance records

Automation also enables organizations to adapt more quickly when regulations change.
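One way to picture policy-based validation with an audit trail is to keep the rules as data and record every decision alongside the policy version that produced it. The sketch below assumes hypothetical flag names, weights, and a `POLICY` table; a regulatory change would then be a policy edit, not a code rewrite.

```python
from datetime import datetime, timezone

# Hypothetical policy table; weights and threshold are invented for illustration.
POLICY = {
    "version": "2026-01",
    "weights": {"high_risk_country": 40, "pep_match": 50, "document_mismatch": 30},
    "manual_review_at": 50,
}

audit_log: list[dict] = []  # stand-in for a centralized compliance record store

def score_applicant(applicant_id: str, flags: set[str], policy: dict = POLICY) -> dict:
    """Score an applicant against the policy and append an audit record."""
    score = sum(w for f, w in policy["weights"].items() if f in flags)
    record = {
        "applicant_id": applicant_id,
        "policy_version": policy["version"],
        "flags": sorted(flags),
        "score": score,
        "outcome": "manual_review" if score >= policy["manual_review_at"] else "auto_clear",
        "timestamp": datetime.now(timezone.utc).isoformat(),
    }
    audit_log.append(record)
    return record

result = score_applicant("A-1001", {"document_mismatch", "high_risk_country"})
print(result["score"], result["outcome"])  # 70 manual_review
```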

Scalability is Critical for Growth

Fintech platforms often experience rapid growth phases where customer verification volumes increase dramatically within short periods.

Manual verification teams cannot scale efficiently during such spikes. Hiring and training large compliance teams is expensive and time-consuming.

Automated KYC systems provide scalability without proportional increases in operational costs. Whether onboarding hundreds or millions of customers, automation ensures consistent processing speed and accuracy.

This scalability is especially important for:

- Digital banks

- Lending platforms

- Cryptocurrency exchanges

- Insurance providers

- Cross-border payment companies

- Investment platforms

AI is Transforming KYC from Reactive to Predictive

Another major shift in 2026 is the evolution of KYC from reactive verification to predictive risk intelligence.

Advanced AI systems are no longer limited to document validation. They now analyze patterns, behaviors, and transaction signals to identify potential risks proactively.

Predictive KYC systems can help organizations:

- Detect suspicious activity earlier

- Prioritize high-risk profiles

- Reduce false positives

- Improve decision-making

- Enhance operational efficiency

This intelligence-driven approach allows compliance teams to focus on strategic risk management rather than repetitive manual tasks.

Cost Reduction is a Major Business Driver

Operational efficiency remains a major factor behind KYC automation investments.

Manual KYC processes involve significant costs related to:

- Staffing

- Training

- Document handling

- Rework

- Error correction

- Compliance management

Automation reduces these expenses while improving processing speed and accuracy.

Many financial institutions are now viewing KYC automation not merely as a compliance investment, but as a long-term profitability and efficiency strategy.

Customer Experience is Now Central to Compliance

Historically, compliance processes were viewed as necessary friction. In 2026, leading fintech firms are proving that strong compliance and excellent customer experience can coexist.

Modern KYC automation solutions offer:

- Mobile-friendly verification

- Real-time document capture

- Seamless biometric authentication

- Faster approvals

- Reduced paperwork

This creates smoother customer journeys while maintaining regulatory integrity.

The institutions winning in 2026 are those that can combine security with simplicity.

The Future of KYC Automation

The future of KYC automation is moving toward fully intelligent onboarding ecosystems powered by AI, automation, and continuous monitoring.

Emerging technologies such as:

- Agentic AI

- Real-time risk intelligence

- Continuous identity monitoring

- AI-powered fraud analytics

- Blockchain-based identity systems

will further redefine how financial institutions manage trust and compliance.

As digital banking ecosystems continue to expand, KYC automation will remain at the center of secure and scalable financial operations.

------------------------------------------------------------------------

The heavy investment in KYC automation by fintechs and banks in 2026 is driven by a simple reality: manual processes can no longer support the speed, scale, and security demands of modern finance.

Financial institutions need faster onboarding, stronger fraud prevention, scalable compliance, and improved customer experiences — all while managing rising regulatory complexity.

AI-powered KYC automation is helping organizations achieve these goals by transforming verification from a slow, reactive process into an intelligent, scalable, and strategic business function.

Businesses that embrace automated KYC today are positioning themselves for stronger growth, lower operational risk, and greater customer trust in the digital financial era.

Sources:

BDO USA: https://www.bdo.com/insights/industries/fintech/2026-fintech-industry-predictions

Business Standard: https://www.business-standard.com/companies/start-ups/india-fintech-ai-adoption-fraud-kyc-lending-compliance-126052100279_1.html

Retail Banker International: https://www.retailbankerinternational.com/features/industry-leaders-give-their-take-on-year-ahead/

OCR Alone Is Not Enough: Why QA Needs Context-Aware AI

For years, Optical Character Recognition (OCR) has been the foundation of document digitization in manufacturing, construction, pharma, and industrial operations. It helped organizations move away from paper-heavy workflows by converting scanned documents into machine-readable text.

But modern Quality Assurance (QA) demands far more than text extraction.

Today’s QA teams are expected to validate complex compliance documents, detect inconsistencies across specifications, and ensure traceability across thousands of records—all while operating under tighter timelines and stricter regulations.

This is where traditional OCR begins to show its limitations.

The next phase of QA automation is being shaped not by OCR alone, but by context-aware AI.

The Problem with Traditional OCR in QA Workflows

OCR was designed to recognize characters and convert images into text. While this works reasonably well for standardized documents, QA environments are rarely simple or uniform.

A typical QA workflow may involve:

- Material Test Reports (MTRs)

- Certificates of Analysis (COAs)

- Inspection reports

- Engineering specifications

- Compliance documents from multiple suppliers

These documents vary significantly in:

- Layouts and formats

- Terminology

- Tables and handwritten notes

- Standards and compliance structures

OCR can extract the text, but it often fails to understand:

- What the text means

- Whether values are within acceptable tolerance levels

- If a requirement is missing

- Whether two related documents contradict each other

This creates a dangerous gap between digitization and intelligent validation.

Why QA Requires Context, Not Just Extraction

Quality Assurance is fundamentally about interpretation.

For example:

- A chemical composition value may appear correctly extracted—but exceed ASTM limits

- A heat number may exist—but not match the corresponding batch record

- A specification clause may reference a testing requirement hidden elsewhere in the document set

OCR cannot identify these contextual relationships because it lacks domain understanding.

Context-aware AI changes this by combining:

- Natural Language Processing (NLP)

- Machine Learning

- Rule-based validation

- Domain-trained intelligence

Instead of simply reading documents, the system understands:

- Relationships between fields

- Industry-specific terminology

- Standards and tolerances

- Cross-document dependencies

How Context-Aware AI Improves QA Operations

1. Intelligent Validation

Modern AI systems can validate extracted information against:

- Industry standards

- Internal quality thresholds

- Historical records

For example, if an MTR contains a tensile strength value outside permissible ranges, the AI can automatically flag it for review.

This reduces the risk of:

- Compliance failures

- Shipment delays

- Production defects
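The tensile strength example can be sketched as a simple range check. The field names and limits in `SPEC_LIMITS` are hypothetical placeholders; real limits would come from the applicable standard or purchase specification.

```python
# Hypothetical limits for illustration only; not taken from any real standard.
SPEC_LIMITS = {
    "tensile_strength_mpa": (400.0, 550.0),
    "yield_strength_mpa": (250.0, None),   # minimum only
    "carbon_pct": (None, 0.26),            # maximum only
}

def out_of_tolerance(values: dict[str, float], limits=SPEC_LIMITS) -> list[str]:
    """Return human-readable findings for missing or out-of-range values."""
    findings = []
    for field, (low, high) in limits.items():
        if field not in values:
            findings.append(f"{field}: missing")
            continue
        v = values[field]
        if low is not None and v < low:
            findings.append(f"{field}: {v} below minimum {low}")
        if high is not None and v > high:
            findings.append(f"{field}: {v} above maximum {high}")
    return findings

mtr = {"tensile_strength_mpa": 385.0, "yield_strength_mpa": 260.0, "carbon_pct": 0.22}
print(out_of_tolerance(mtr))
# ['tensile_strength_mpa: 385.0 below minimum 400.0']
```

An empty list means every checked value is inside its range; anything else would be routed to a reviewer.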

2. Cross-Document Correlation

QA decisions rarely rely on a single document.

A context-aware AI platform can connect:

- COAs with supplier records

- MTRs with inspection reports

- Drawings with specifications

This creates a unified understanding of quality data rather than isolated document processing.

3. Detection of Missing or Inconsistent Data

One of the biggest operational risks is missing information.

AI can identify:

- Absent compliance clauses

- Missing test parameters

- Incomplete certificates

- Conflicting values across documents

This significantly improves audit readiness and reduces manual review effort.
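A minimal sketch of these two checks, missing fields within one document and conflicting values between two documents, might look like the following. The required field list and the sample records are assumptions for illustration.

```python
# Hypothetical list of fields a COA must carry.
REQUIRED_FIELDS = {"batch_id", "test_method", "purity_pct", "signed_by"}

def missing_fields(doc: dict) -> set[str]:
    """Fields that are absent or empty in the document."""
    return {f for f in REQUIRED_FIELDS if doc.get(f) in (None, "")}

def conflicts(doc_a: dict, doc_b: dict) -> dict[str, tuple]:
    """Fields present in both documents whose values disagree."""
    shared = doc_a.keys() & doc_b.keys()
    return {k: (doc_a[k], doc_b[k]) for k in shared if doc_a[k] != doc_b[k]}

coa = {"batch_id": "B-204", "test_method": "", "purity_pct": 99.1, "signed_by": "QA Lead"}
batch_record = {"batch_id": "B-240", "purity_pct": 99.1}

print(missing_fields(coa))           # {'test_method'}
print(conflicts(coa, batch_record))  # {'batch_id': ('B-204', 'B-240')}
```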

4. Faster Processing at Scale

As organizations grow, manual QA reviews become difficult to scale.

Context-aware AI enables teams to process:

- Thousands of quality documents

- Multiple supplier formats

- Large project datasets

All of this happens without a proportional increase in manpower.

This allows QA teams to focus on:

- Decision-making

- Risk assessment

- Supplier quality improvement

That focus replaces repetitive document checking.

How Industries Are Moving Beyond OCR

Manufacturing and construction companies are increasingly realizing that OCR alone cannot support modern operational complexity.

In sectors such as:

- Steel and metals

- Pharma manufacturing

- EPC and construction

- Chemicals and industrial products

organizations are adopting AI-driven QA systems that deliver:

- Structured intelligence

- Automated validation

- Real-time quality insights

This shift is turning QA from a reactive compliance function into a strategic operational capability.

Where Context-Aware AI Creates Competitive Advantage

The impact extends beyond efficiency.

Organizations using intelligent QA automation are seeing:

- Faster approvals

- Reduced rework

- Improved supplier accountability

- Stronger compliance outcomes

- Better operational visibility

More importantly, they are reducing the hidden costs associated with:

- Manual verification

- Human oversight errors

- Delayed quality decisions

How Star Software Approaches QA Automation

Solutions like those developed by Star Software reflect this shift toward intelligent QA.

Rather than relying solely on OCR, Star Software’s AI-powered approach focuses on:

- Understanding document context

- Mapping relationships between data points

- Validating information against business and industry rules

- Processing complex QA documents at scale

This enables organizations to move from basic document digitization to actionable quality intelligence.

The Future of QA Is Intelligent

The volume and complexity of industrial documents will only continue to grow.

Organizations that continue relying solely on OCR may digitize their paperwork—but they will still struggle with:

- Validation

- Interpretation

- Risk detection

- Decision-making

The future belongs to systems that can understand context, identify relationships, and support intelligent actions.

Because in Quality Assurance, reading text is only the beginning.

Understanding what it means is what truly matters.

How Intelligent Document Processing is Redefining Quality Assurance in Manufacturing

Quality assurance (QA) in manufacturing has always been document-heavy—inspection reports, certificates of analysis (CoA), mill test reports (MTRs), supplier declarations, and compliance records. For decades, these documents have been manually reviewed, verified, and archived, creating bottlenecks that slow down operations and introduce risk.

Today, Intelligent Document Processing (IDP) is transforming this landscape—turning QA from a reactive, manual function into a proactive, data-driven system.

The Legacy QA Challenge: Too Many Documents, Too Little Intelligence

A typical QA workflow involves:

- Reviewing supplier documents for compliance

- Cross-checking inspection data against specifications

- Validating heat numbers, batch IDs, and material grades

- Ensuring documentation is audit-ready

However, most organizations still struggle with:

- Manual data entry and verification

- Inconsistent document formats across vendors

- Delayed quality checks due to processing backlogs

- Human errors leading to compliance risks

In high-volume environments, even small inaccuracies can escalate into production delays, rejected shipments, or regulatory penalties.

What is Intelligent Document Processing (IDP)?

IDP combines:

- Optical Character Recognition (OCR)

- Artificial Intelligence (AI)

- Machine Learning (ML)

- Natural Language Processing (NLP)

to extract, interpret, and validate data from structured and unstructured documents.

But in QA, IDP goes beyond extraction—it enables contextual understanding and decision support.

How IDP is Transforming Quality Assurance

1. Automated Data Extraction from Complex QA Documents

IDP systems automatically capture critical data points such as:

- Material grades

- Heat numbers and batch IDs

- Mechanical and chemical properties

- Supplier details

Unlike traditional OCR, modern IDP understands document context, reducing misinterpretation of fields.

2. Intelligent Validation and Cross-Verification

One of the biggest QA challenges is ensuring consistency across documents.

IDP enables:

- Cross-checking MTR data with purchase specifications

- Matching packing slips with inspection reports

- Validating CoA values against acceptable thresholds

This creates a multi-layer validation system, significantly reducing manual intervention.

3. Real-Time Error Detection and Anomaly Identification

IDP platforms can:

- Detect missing or inconsistent fields

- Flag abnormal values in test results

- Identify mismatches in heat numbers or batch IDs

Instead of discovering errors during audits, manufacturers can now catch them in real time.

4. Standardization Across Vendor Documents

Suppliers often use different formats, terminologies, and layouts.

IDP solves this by:

- Converting diverse document formats into standardized data models

- Structuring data using formats like JSON

- Applying consistent validation rules across vendors

The result: uniform, comparable, and reliable QA data.
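To make the standardization step concrete, the sketch below maps differing vendor labels onto one canonical record and serializes it as JSON. The alias table and field names are hypothetical; a production system would likely learn or configure these per supplier.

```python
import json

# Hypothetical vendor label variants mapped to canonical field names.
FIELD_ALIASES = {
    "heat no": "heat_number", "heat #": "heat_number", "heat number": "heat_number",
    "grade": "material_grade", "material grade": "material_grade",
    "uts (mpa)": "tensile_strength_mpa", "tensile strength mpa": "tensile_strength_mpa",
}

def normalize(raw: dict[str, str]) -> dict:
    """Map vendor-specific labels to canonical keys; keep unknown labels aside."""
    record, unmapped = {}, {}
    for label, value in raw.items():
        key = FIELD_ALIASES.get(label.strip().lower().rstrip(":"))
        if key is None:
            unmapped[label] = value   # surfaced for review, not silently dropped
        else:
            record[key] = value.strip()
    record["unmapped"] = unmapped
    return record

vendor_a = {"Heat No:": " H12345 ", "Grade": "A36", "Remarks": "none"}
print(json.dumps(normalize(vendor_a), sort_keys=True))
# {"heat_number": "H12345", "material_grade": "A36", "unmapped": {"Remarks": "none"}}
```

Keeping unmapped labels in the output is a deliberate choice here: a new vendor format shows up as data to review, not as a silent gap.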

5. Faster Audits and Compliance Readiness

With IDP:

- Documents are digitized and indexed automatically

- Audit trails are maintained with timestamps and user logs

- Data is searchable and instantly accessible

This ensures organizations are always audit-ready, not scrambling during inspections.

6. Enabling Predictive Quality Assurance

Perhaps the biggest shift is from reactive QA to predictive QA.

By analyzing extracted data over time, IDP systems can:

- Identify recurring defects from specific suppliers

- Detect trends in material quality

- Predict potential failures before they occur

This transforms QA into a strategic function, not just a compliance requirement.
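A very simple precursor to predictive QA is tracking rejection rates per supplier from already-extracted data. The sketch below uses invented sample history and an assumed `max_rate` threshold; it only describes past lots and is not a forecasting model.

```python
from collections import defaultdict

# (supplier, passed_inspection) per received lot; sample data is invented.
history = [
    ("Supplier A", True), ("Supplier A", True), ("Supplier A", False), ("Supplier A", True),
    ("Supplier B", False), ("Supplier B", False), ("Supplier B", True), ("Supplier B", False),
]

def rejection_rates(lots):
    """Share of rejected lots per supplier."""
    totals, rejects = defaultdict(int), defaultdict(int)
    for supplier, passed in lots:
        totals[supplier] += 1
        if not passed:
            rejects[supplier] += 1
    return {s: rejects[s] / totals[s] for s in totals}

def watchlist(lots, max_rate=0.5):
    """Suppliers whose rejection rate exceeds the assumed threshold."""
    return sorted(s for s, r in rejection_rates(lots).items() if r > max_rate)

print(rejection_rates(history))  # {'Supplier A': 0.25, 'Supplier B': 0.75}
print(watchlist(history))        # ['Supplier B']
```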

Real-World Impact: What Manufacturers Are Seeing

Organizations adopting IDP in QA report:

- 📉 Up to 80–90% reduction in manual document processing effort

- ⚡ Faster turnaround in quality verification cycles

- ✅ Improved accuracy and reduced compliance risks

- 📊 Better visibility into supplier and material performance

More importantly, QA teams can now focus on decision-making rather than data entry.

Where Solutions Like Star Software Fit In

Platforms like Star Software bring together:

- AI-powered extraction

- Context-aware validation

- Custom workflows for industry-specific QA needs

This allows manufacturers to build scalable, intelligent QA ecosystems that adapt to real-world variability—whether it’s inconsistent supplier formats or complex certification requirements.

Quality assurance has long been constrained by documentation complexity. Intelligent Document Processing removes that constraint.

By turning documents into structured, actionable data, IDP is not just improving QA—it is redefining it.

And in an industry where precision defines reputation, that shift is both timely and necessary.

How Star Software Ensures 100% Traceability from Packing Slips to MTRs

In metals, chemicals, and manufacturing, traceability isn’t just a compliance requirement; it’s a business imperative. A single mismatch between a packing slip and a Mill Test Report (MTR) can lead to rejected shipments, compliance risks, or even safety issues, and MTR traceability automation is designed to catch such mismatches before they cause damage.

Yet, most organizations still rely on fragmented processes—manual data entry, disconnected systems, and inconsistent document formats.

This is where Star Software’s AI-powered document intelligence platform fundamentally changes the game.

The Traceability Problem: Where Things Break

In a typical workflow:

- Packing slips arrive at irregular intervals

- MTRs follow different formats depending on vendors

- Critical fields like heat number, part number, and quantity must match exactly

But in reality:

- Identification codes are misread as heat numbers

- Vendor-specific formats create inconsistencies

- Manual mapping leads to human error

Even a 1–2% mismatch rate can translate into significant operational and financial losses at scale.

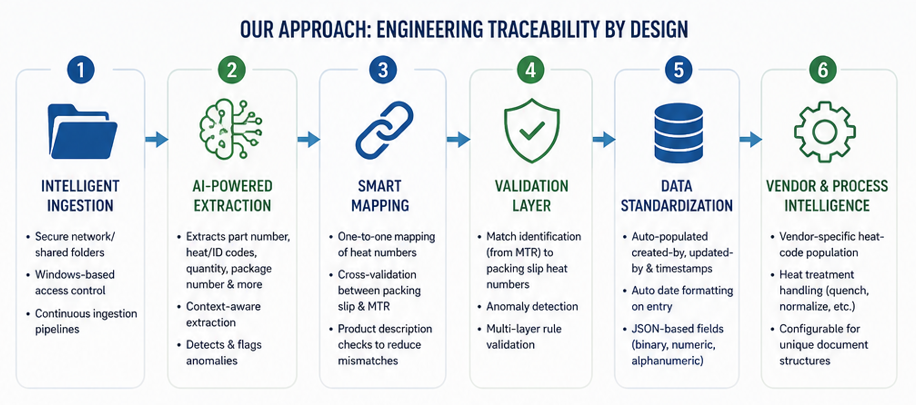

Star Software’s Approach: Engineering Traceability by Design

Instead of treating traceability as a downstream validation step, Star Software embeds it directly into the data pipeline.

1. Intelligent Document Ingestion

Documents are automatically ingested through:

- Secure network/shared folders

- Controlled user access (Windows-based authentication)

- Continuous ingestion pipelines

This ensures no document is missed, even when packing slips arrive months apart.

2. AI-Powered Field Extraction with Context Awareness

The platform extracts key fields such as:

- Part number

- Heat/identification codes

- Quantity

- Package number

- Product description

But what sets it apart is context-aware extraction.

For example:

- The system distinguishes between identification codes and heat numbers

- It flags anomalies where labels are misinterpreted

- It continuously learns from edge cases (like legacy PDF formats)

This directly addresses real-world issues like misclassification errors observed during parsing.

3. Smart Field Mapping Between Packing Slips and MTRs

Traceability depends on accurate mapping—not just extraction.

Star Software ensures:

- One-to-one mapping of heat numbers across documents

- Cross-validation between packing slip data and MTR fields

- Product description checks to reduce false matches

This multi-layer validation creates a closed-loop traceability system, not just a data capture tool.
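The mapping logic described above can be sketched as a heat-number join with a second check on part number. This is an illustrative outline under assumed field names (`heat_number`, `part_number`, `mtr_id`), not Star Software's actual implementation.

```python
def match_slip_to_mtr(slip_lines: list[dict], mtrs: list[dict]) -> dict:
    """Pair each packing slip line with exactly one MTR, or record why not."""
    by_heat: dict[str, list[dict]] = {}
    for m in mtrs:
        by_heat.setdefault(m["heat_number"], []).append(m)

    matched, exceptions = [], []
    for line in slip_lines:
        heat = line["heat_number"]
        candidates = by_heat.get(heat, [])
        if len(candidates) != 1:
            # One-to-one mapping is required: zero or several MTRs is an exception.
            reason = "no MTR" if not candidates else "multiple MTRs"
            exceptions.append((heat, reason))
        elif candidates[0]["part_number"] != line["part_number"]:
            # Second-layer check to reduce false matches.
            exceptions.append((heat, "part number mismatch"))
        else:
            matched.append((heat, candidates[0]["mtr_id"]))
    return {"matched": matched, "exceptions": exceptions}

slip = [
    {"heat_number": "H100", "part_number": "P-1"},
    {"heat_number": "H200", "part_number": "P-2"},
    {"heat_number": "H300", "part_number": "P-3"},
]
mtr_docs = [
    {"mtr_id": "MTR-1", "heat_number": "H100", "part_number": "P-1"},
    {"mtr_id": "MTR-2", "heat_number": "H200", "part_number": "P-9"},
]
print(match_slip_to_mtr(slip, mtr_docs))
# {'matched': [('H100', 'MTR-1')], 'exceptions': [('H200', 'part number mismatch'), ('H300', 'no MTR')]}
```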

4. Automated Data Population & Standardization

To eliminate manual inconsistencies:

- Fields like created-by, updated-by, and timestamps are auto-populated via SQL

- Date formats are standardized at the database level

- Data types (binary, numeric, alphanumeric) are enforced through structured schemas (JSON-based)

The result:

Clean, audit-ready data from the moment of entry.

5. Vendor-Specific Logic Handling

Not all suppliers follow the same rules.

Star Software incorporates:

- Vendor-specific heat-code mapping (e.g., custom logic for different suppliers)

- Heat-treatment workflows (quench, normalize, etc.)

- Configurable rules for unique document structures

This ensures traceability even in highly heterogeneous supply chains.
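Configurable vendor rules could be as simple as a per-vendor pattern that says what a plausible heat number looks like, which also helps separate heat numbers from other identification codes. The vendor keys and formats below are hypothetical; real formats would come from each supplier's documentation.

```python
import re

# Hypothetical per-vendor heat-number formats.
VENDOR_HEAT_PATTERNS = {
    "vendor_a": re.compile(r"[A-Z]\d{5}"),         # e.g. H12345
    "vendor_b": re.compile(r"\d{2}-\d{4}[A-Z]?"),  # e.g. 24-0193B
}
# Loose fallback for vendors without a configured rule.
DEFAULT_PATTERN = re.compile(r"[A-Z0-9-]{4,12}")

def is_plausible_heat(vendor: str, code: str) -> bool:
    """True if the code matches the vendor's configured heat-number format."""
    pattern = VENDOR_HEAT_PATTERNS.get(vendor, DEFAULT_PATTERN)
    return bool(pattern.fullmatch(code.strip().upper()))

print(is_plausible_heat("vendor_a", "h12345"))    # True
print(is_plausible_heat("vendor_a", "24-0193B"))  # False
print(is_plausible_heat("vendor_b", "24-0193B"))  # True
```

A code that fails its vendor's pattern would be flagged for review instead of being stored as a heat number.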

6. Continuous Learning with Real-World Variability

A major challenge in automation is variability:

- Old vs new document layouts

- Inconsistent labeling conventions

- Scanned vs digital PDFs

Star Software addresses this by:

- Training models on diverse sample sets

- Continuously validating against historical documents

- Refining extraction logic with each iteration

This makes the system adaptive, not static.

The Business Impact: Beyond Compliance

Organizations implementing this approach typically see:

- Up to 90% reduction in manual verification effort

- Faster document processing cycles

- Near-zero mismatch rates in traceability

- Improved audit readiness and compliance confidence

More importantly, it builds trust across the supply chain—from suppliers to end customers.

Traceability is often treated as a documentation problem. In reality, it’s a data architecture problem.

And in industries where precision is non-negotiable, that’s not just an advantage—it’s essential.

COA Fraud Detection Checklist

A Certificate of Analysis (COA) is a critical quality document confirming that a product meets defined specifications before release.

However, with the rise of counterfeit and substandard products, COA fraud has become a serious risk across pharma, chemicals, and metals.

Why this matters

- Counterfeit pharmaceuticals alone represent a $200B+ global problem (Source: Wikipedia)

- In some developing markets, over 30% of medicines may be fake

- Fake or manipulated documentation (including COAs) is a key enabler of such fraud

This makes COA validation not just a compliance task, but a risk management function.

A Structured Checklist on COA Fraud:

| Checkpoint Category | Fraud Indicator | What to Verify | Risk Level | Industry Insight / Data Point |
|---|---|---|---|---|
| Document Authenticity | Missing or inconsistent certificate number | Verify unique COA ID across batches | High | Fake documentation often lacks traceable IDs |
| Document Authenticity | No authorized signature or digital validation | Check signer credentials and audit trail | High | COA approval is mandatory before product release (sec.gov) |
| Document Authenticity | Altered or scanned-looking signatures | Compare with known authorized signatories | Medium | Forged approvals are a common fraud pattern |
| Supplier Verification | Unknown or unverified lab issuing COA | Cross-check lab accreditation | High | Weak regulatory systems increase counterfeit risks (Wikipedia) |
| Supplier Verification | Mismatch between supplier and testing lab | Validate third-party lab relationship | High | Fraud often occurs via fake third-party labs |
| Data Integrity | Identical test results across multiple batches | Check for data duplication patterns | High | Repetition suggests fabricated or copied data |
| Data Integrity | Values too “perfect” (no variance) | Compare with historical batch variation | Medium | Real-world manufacturing always shows variation |
| Data Integrity | Missing test parameters | Ensure all required specs are present | High | COA must include all defined test procedures (ghsupplychain.org) |
| Product-Level Validation | Batch number mismatch | Cross-check with shipment and invoice | High | Fraud often involves relabeling expired or fake goods |
| Product-Level Validation | Expiry dates overwritten or inconsistent | Validate against production records | High | Fake drugs often carry incorrect expiry info (Wikipedia) |
| Compliance Check | Non-alignment with regulatory standards (FDA, ASTM, ISO) | Validate required compliance fields | High | Regulatory gaps enable counterfeit circulation |
| Compliance Check | Missing GMP references | Verify manufacturing compliance | High | Fraud often bypasses GMP documentation |
| Testing & Results Validation | Unrealistic purity levels | Compare with industry benchmarks | Medium | Counterfeit products may misrepresent composition |
| Testing & Results Validation | No trace of test method (HPLC, GC, etc.) | Ensure method transparency | High | COAs must include validated testing methods (sec.gov) |
| Format & Structure Analysis | Inconsistent formatting across COAs | Compare with previous supplier documents | Medium | Fraudsters often replicate formats imperfectly |
| Format & Structure Analysis | Spelling errors or inconsistent units | Check for anomalies | Low | Red flag for manually created fake documents |
| Digital Verification | No QR code / blockchain / digital trace | Verify authenticity digitally | High | Increasing shift toward traceability systems |
| Behavioral Red Flags | Supplier reluctance to share raw test data | Request supporting lab reports | High | Lack of transparency often signals fraud |
| Behavioral Red Flags | Urgency in shipment without validation | Apply standard QA workflow | Medium | Fraud often exploits time pressure |

Key Patterns Observed in COA Fraud

1. Data Fabrication & Copy-Paste Fraud

- Identical values across batches

- Reused templates with minor edits

Both patterns are increasingly detectable using AI-based pattern recognition.
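Even before any machine learning is involved, a rule-based pass can surface the two signals listed here: batches with identical result sets, and parameters that never vary. The sketch below uses invented sample COA values and an assumed minimum of three batches before judging variance.

```python
from statistics import pstdev

def fabrication_flags(batches: dict[str, dict[str, float]], min_stdev: float = 1e-9) -> list[str]:
    """Flag duplicated result sets and parameters with no batch-to-batch variation."""
    flags = []
    # 1. Exact duplicates: two batches reporting identical result sets.
    seen: dict[tuple, str] = {}
    for batch_id, results in batches.items():
        key = tuple(sorted(results.items()))
        if key in seen:
            flags.append(f"{batch_id} duplicates {seen[key]}")
        else:
            seen[key] = batch_id
    # 2. No variance: a parameter whose value never changes across batches.
    params = set().union(*(r.keys() for r in batches.values()))
    for p in sorted(params):
        values = [r[p] for r in batches.values() if p in r]
        if len(values) >= 3 and pstdev(values) <= min_stdev:
            flags.append(f"{p} shows no variation across {len(values)} batches")
    return flags

coas = {
    "B-001": {"purity_pct": 99.2, "moisture_pct": 0.31},
    "B-002": {"purity_pct": 99.2, "moisture_pct": 0.31},
    "B-003": {"purity_pct": 99.2, "moisture_pct": 0.28},
}
print(fabrication_flags(coas))
# ['B-002 duplicates B-001', 'purity_pct shows no variation across 3 batches']
```

A flag is a prompt for investigation, not proof of fraud: some tightly controlled parameters can legitimately repeat.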

2. Counterfeit Product + Fake COA Combination

- Fake drugs or materials paired with convincing documentation

- Often includes incorrect ingredients or no active ingredient at all

3. Third-Party Lab Misrepresentation

- Fake lab names or unaccredited labs

- Misuse of legitimate lab branding

4. Expiry & Relabeling Fraud

- Expired materials reintroduced with altered COAs

- Particularly common in pharma and chemicals

How Leading Companies Are Responding

Modern organizations are moving from manual checks → AI-driven validation:

- Automated extraction of COA fields

- Cross-document validation (COA vs invoice vs batch records)

- Pattern detection (duplicate values, anomalies)

- Supplier risk scoring

This aligns with a broader trend: document intelligence is becoming a core compliance layer.

COA fraud is no longer a rare compliance issue—it is a systemic supply chain risk tied to:

- Counterfeit products

- Regulatory penalties

- Brand damage

- Patient and customer safety

A structured checklist like the one above helps—but scaling it requires automation.